You Don't Need an AI Hire. You Need an AI Champion.

The instinct makes sense. Hire someone younger who gets AI. Have them sit next to the person who knows the work. Let them figure it out.

It won't work. Here's why.

A business owner in a session I co-hosted came in with a plan.

He runs a service business. Sharp guy. His construction accountant is good at her job. He doesn't understand her work and doesn't need to. His plan: hire a college student at a thousand dollars a week, have them sit next to her, figure out how AI could make her more efficient. He'd already done the ROI math in his head.

He had the right idea. He saw the bottleneck, ran the numbers, and was ready to move. He was just wrong about who should do the work.

The Correction Came From Someone Else in the Room

Another business owner cut in -- not me, not my co-host. A peer.

"It'll give you an advantage. But hiring a kid -- you won't know what to tell them to work on. You know what your business needs better than anybody."

That's the line that matters. Not because it's surprising, but because of who said it.

Not a consultant. Not someone with a service to sell. Someone in the same seat.

Here's the trap that peer saw: if you hire someone who doesn't know the work to implement the tech, you're just adding another layer of management. You're now managing a kid who's trying to manage a process he doesn't understand. That's $4,000 a month -- $1,000 a week, four weeks -- before you've changed a single process.

The Matrix Problem

My co-host put it this way: when you're a subject matter expert watching AI work through your domain -- accounting, legal, operations, doesn't matter -- you immediately know when something is wrong. You can feel it before you can name it.

Someone else watching the same screen? It's a wall of scrolling text. Impressive-looking. Meaningless to them.

The kid can learn to use the tools. He cannot catch the mistake in work he doesn't understand.

Your construction accountant has been doing this for years. She knows when the number doesn't look right. She knows which edge cases matter and which don't. She knows which reports take six hours that should take six minutes.

She needs to be the one watching the screen. Not as a spectator. As the person whose judgment the whole system depends on.

That's not a junior hire. That's someone you already have.

What an AI Champion Actually Is

Here's what I keep seeing every time this works.

The company finds the person who knows a specific workflow cold. Not the most tech-savvy person on the team. Often not even close. The person who knows the work best.

They give that person time and permission to experiment. They pair them with someone who can build what that person can judge. And then they protect the feedback loop -- the champion's ability to say "that's wrong" is the whole system.

McKinsey put a name to it -- "AI translators." Their 2025 State of AI report found that high-performing organizations are 3x more likely to have someone in that role actively championing AI. Different label. Same model.

Another founder -- he runs a plumbing company -- was already doing this. He wasn't hiring anyone. He was enlisting his existing SME. His framing: "You gotta break the seal. Once you break the seal, it changes."

That's it. One person. One workflow. An outside partner who can build what that person can judge. Neither half works without the other.

The outside partner can't do this alone -- they don't know which output is wrong. The inside champion can't do it alone -- they don't have the build skills to harden what works.

The Gap Most Businesses Are Stuck In

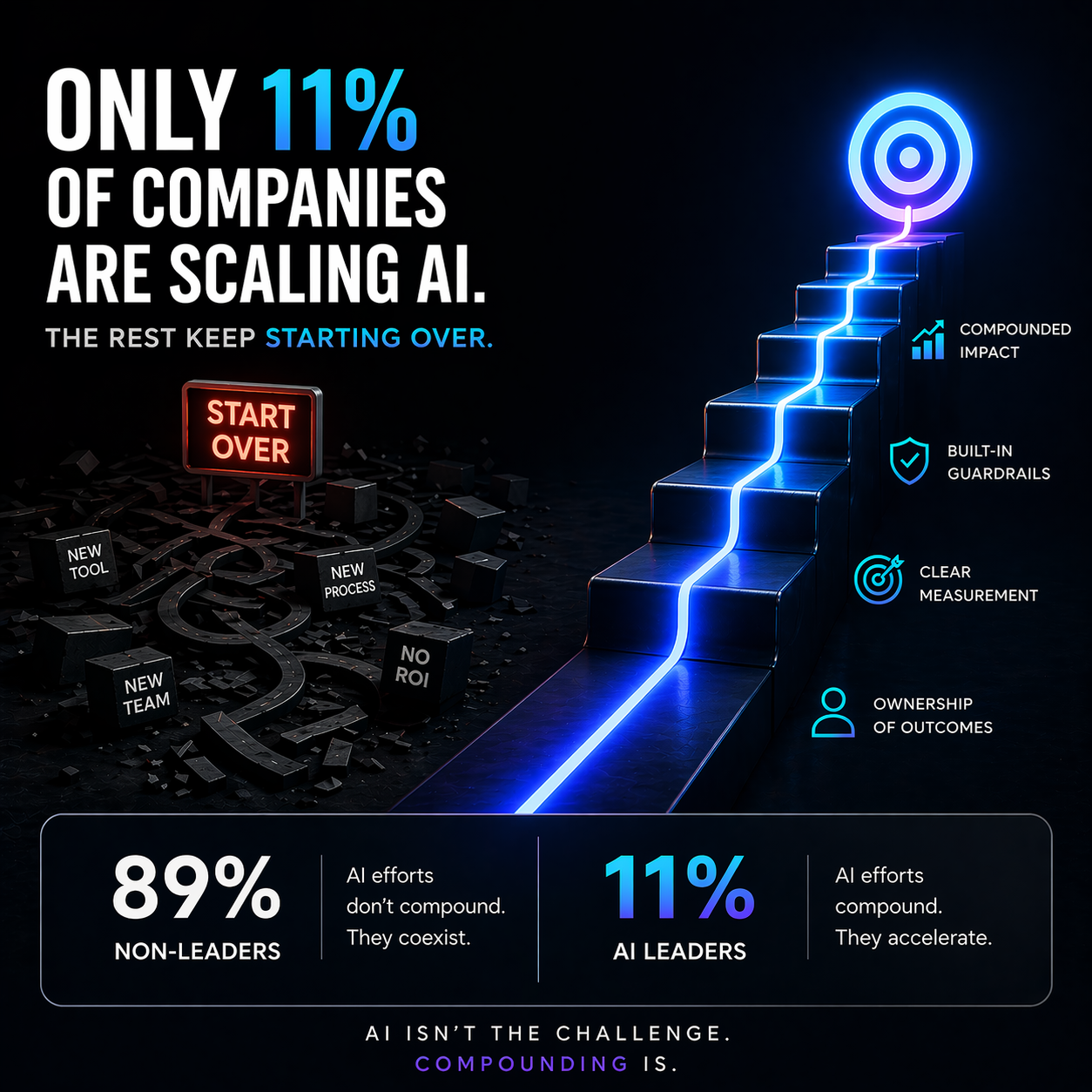

The gap has a number. Goldman Sachs put it on paper last March -- 10,000 small business owners. 93% of AI-using businesses say it's had a positive impact. Only 14% have fully integrated it into core operations. (Goldman Sachs 10,000 Small Businesses, March 2026)

79 points between "it helps" and "it's embedded in how we run."

That gap has a name. It's the absent Champion.

The business tried something. It worked. Nobody owns it. Nobody teaches the team. Nobody builds the next thing on top of it. Six months later they have four AI "projects" and no AI muscle.

The Champion is the missing piece. Not because they're technical. Because they know the work well enough to say what good looks like.

He Got There

At the end of the session -- two-plus hours in, same room, same people -- the same business owner came back to it.

"If I hire this kid, can I hire you to teach him? Help him be my champion?"

He got there. Not through persuasion. Through the conversation. Watching other owners work through it.

The model is that simple once you've seen it working.

The Question to Answer Before You Post a Job

Who in your company already knows the workflow you most need to fix?

Not "who's excited about AI." Not "who's most technical." Who knows the work so well that they'd catch a mistake in three seconds that would take anyone else an hour to find?

That's your starting point. One person. One workflow. If you missed the earlier post on why your domain expertise is worth more than any AI prompt, this is the practical application of that idea.

If you need the outside-partner half of that equation -- someone who can build what your Champion can judge -- that's exactly what we do at JOV AI.

This is the fourth post in a series about what I learned co-hosting a 140-minute AI session with a group of business owners.