How senior engineers and AI collaborate to deliver 10x development velocity without sacrificing quality or control.

TL;DR: Vibe Coding is a methodology where senior engineers provide strategic direction while AI agents handle tactical implementation. This approach delivers 60-80% time reduction while maintaining or improving quality through human oversight and systematic verification.

- Introduction: Beyond AI-Assisted Coding

- Core Philosophy

- The Vibe Coding Process

- Real-World Implementation Examples

- Performance Metrics and Outcomes

- Tools and Technology Stack

- Common Patterns and Anti-Patterns

- Advanced Techniques

- Getting Started with Vibe Coding

- Real-World Case Study

- Conclusion

Most "AI-assisted development" tools focus on autocomplete and code generation. Vibe Coding is fundamentally different—it's a methodology where senior engineers provide strategic direction while AI agents handle tactical implementation. This isn't about writing code faster; it's about thinking at a higher level while maintaining complete control over the outcome.

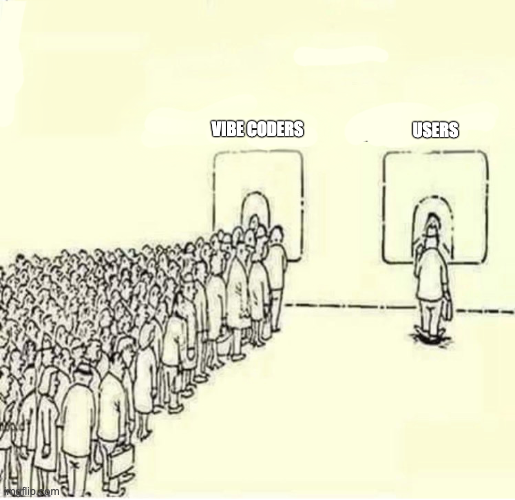

The term "vibe coding" was coined by AI researcher Andrej Karpathy in February 2025, describing an approach where "a person describes a problem in a few natural language sentences as a prompt to a large language model (LLM) tuned for coding." Karpathy's original concept focused on the conversational, natural flow of human-AI collaboration—"I just see stuff, say stuff, run stuff, and copy paste stuff, and it mostly works."

However, some interpretations have added the requirement that developers "accept code without full understanding." This represents one particular form of vibe coding, often advocated for rapid prototyping scenarios. We believe this limitation isn't inherent to Karpathy's original vision and may actually constrain the methodology's potential.

Our Approach: We build directly on Karpathy's original definition—natural language collaboration with AI—while maintaining the technical rigor that senior engineers bring to any development process. This preserves the "vibe" (the flow, creativity, and speed) while ensuring production quality.

Why This Honors the Original Vision: Karpathy himself is an exceptionally skilled engineer who would naturally understand and verify any code he works with. The "vibe" isn't about abandoning engineering principles—it's about embracing a more intuitive, conversational development flow.

Karpathy's Original Vibe Coding: Natural language direction with conversational AI collaboration

Some Current Interpretations: Accept code without understanding (rapid prototyping focus)

Production Vibe Coding: Natural language direction with maintained engineering oversight

Our methodology represents a return to the core principle: leveraging AI to think and create at a higher level while preserving the engineering judgment that makes software reliable.

In traditional development, engineers write every line of code. In Vibe Coding, humans and AI collaborate as strategic partners, each contributing distinct strengths. This isn't about artificial hierarchy—it's about leveraging complementary capabilities.

The Human's Role: Providing context that AI lacks access to—business requirements, user needs, system constraints, organizational priorities, and the crucial knowledge of "what you don't know that you don't know." Humans also catch when AI solutions miss important nuances or make assumptions about requirements that weren't explicitly stated.

The AI's Role: Rapid implementation across multiple technologies and languages, broad knowledge synthesis, and handling tactical execution once the strategic direction is clear. AI can work faster and across more technology stacks than most humans.

AI's Advantages: AI can maintain consistent focus without fatigue, simultaneously consider multiple approaches and trade-offs, instantly recall patterns from vast codebases, and work across dozens of programming languages and frameworks without context switching overhead. AI doesn't get frustrated by repetitive tasks and can rapidly iterate through solution variations that would take humans hours to explore.

Human I/O Advantages: Humans can take in visual information far faster than an AI can ingest it through a limited context window. A human can rapidly scan long log files, spot relevant errors in dense output, process visual information like charts or UI layouts, and use pattern recognition to identify issues that would require extensive context for AI to understand. This makes humans far more efficient for monitoring, visual debugging, and processing large amounts of unstructured output.

Training Pattern Considerations: AI models trained on high-quality code repositories can elevate less experienced developers above typical industry standards. For expert practitioners, AI may default to "best practice" patterns that don't match specific context or expert-level architectural decisions. This is why the planning phase is crucial—it establishes the specific requirements and constraints before AI begins implementation, preventing reversion to generic training patterns.

Why This Works: AI doesn't know what it doesn't know. Humans provide the missing context, constraints, and domain knowledge that AI can't infer. Once that context is established, AI can execute faster and more comprehensively than humans typically can.

AI agents handle implementation details but humans maintain quality control through:

- Continuous review: Every AI-generated solution is examined before integration

- Testing requirements: Changes aren't complete until verified

- Architectural consistency: Ensuring all components work together

- Performance considerations: Optimizing for real-world usage patterns

The Challenge: AI assistants have limited context windows, making complex projects difficult to manage.

Solution: Treat context windows as a resource to be managed strategically.

Complex development projects inevitably hit the limits of AI context windows. When this happens, the typical response is to start over, losing all the accumulated context and decisions. This creates a frustrating cycle where progress gets reset every few hours.

The solution is to externalize that context into persistent documentation. Before starting any multi-step project, create a plan document that captures not just what you're building, but why you're building it that way.

# plans/feature-name.md

## Goal

Clear, measurable objective

## Current Status

What's been completed, what's next

## Architecture Decisions

Key choices and rationales

## Implementation Notes

Specific technical details for continuation

Why This Works: When you inevitably reach context limits, any team member—human or AI—can read the plan and understand exactly where things stand. The plan becomes a shared knowledge base that survives context switches, team handoffs, and project interruptions.

The Living Document Principle: These aren't static requirements documents. Plans evolve as you learn. When you discover a better approach or hit an unexpected constraint, update the plan immediately. This creates a real-time record of project knowledge that becomes invaluable for similar future projects.

Context Compression: A well-written plan compresses hours of discussion and discovery into a few hundred words. Instead of re-explaining the entire project background, you can start new sessions with "read the plan and let's continue from step 3."
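As a rough illustration of the plan template above, the following sketch renders a plan skeleton with the same four headings and writes it under plans/. The function names `render_plan` and `write_plan` and the "TODO" placeholder are assumptions made for this example, not part of any tool mentioned here.

```python
from pathlib import Path

# Section headings taken from the plan template.
PLAN_SECTIONS = ("Goal", "Current Status", "Architecture Decisions", "Implementation Notes")


def render_plan(feature_name: str, sections: dict[str, str]) -> str:
    """Render a plan document; sections without content get a TODO placeholder."""
    lines = [f"# plans/{feature_name}.md", ""]
    for title in PLAN_SECTIONS:
        body = sections.get(title, "").strip() or "TODO"
        lines += [f"## {title}", "", body, ""]
    return "\n".join(lines)


def write_plan(root: Path, feature_name: str, sections: dict[str, str]) -> Path:
    """Write the rendered plan to <root>/plans/<feature_name>.md and return its path."""
    plan_path = root / "plans" / f"{feature_name}.md"
    plan_path.parent.mkdir(parents=True, exist_ok=True)
    plan_path.write_text(render_plan(feature_name, sections), encoding="utf-8")
    return plan_path
```

Starting every plan from the same skeleton makes it easier for a new session, human or AI, to find the current status quickly.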

- Front-load critical information: Most important details first

- Reference external documentation: Link to specs rather than repeating them

- Surgical changes: Modify only what needs changing

- Progressive disclosure: Reveal complexity as needed

The breakthrough in Vibe Coding productivity came with direct filesystem access. Before this, collaboration was confined to AI chat interfaces with embedded document canvases that were, frankly, inadequate. These interfaces reinvented existing technology poorly and could realistically only handle one file at a time, despite appearing more capable.

The Filesystem Revolution: Direct filesystem access changed everything. Suddenly, AI could work on as many concurrent files as necessary—reading existing code, writing new implementations, editing configurations, and managing entire project structures simultaneously. The productivity increase was dramatic and immediate.

Risk vs. Reward: Yes, giving AI direct filesystem access carries risks. We mitigate with version control (git) and accept that catastrophic failures might occur. The benefit-to-risk ratio is overwhelmingly positive when you can work on real projects instead of toy examples.
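One way to make the git mitigation concrete is to commit a checkpoint before each AI session, so there is always a known state to return to. The sketch below is a minimal example under that assumption; the helper names and the "checkpoint:" commit message prefix are hypothetical.

```python
import subprocess


def checkpoint_commands(repo_dir: str, label: str) -> list[list[str]]:
    """Build the git commands that snapshot the working tree before an AI session."""
    git = ["git", "-C", repo_dir]
    return [
        git + ["add", "--all"],
        # --allow-empty records the checkpoint even when nothing has changed yet.
        git + ["commit", "--allow-empty", "-m", f"checkpoint: {label}"],
    ]


def create_checkpoint(repo_dir: str, label: str) -> None:
    """Run the checkpoint commands, raising if git reports an error."""
    for command in checkpoint_commands(repo_dir, label):
        subprocess.run(command, check=True)
```

If a session goes wrong, the checkpoint commit gives a known state to inspect with `git diff` or to return to.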

The Productivity Multiplier: Once AI and human are on the "same page" about implementation approach (through planning documents), direct filesystem access enables true collaboration. No more copying and pasting between interfaces. No more artificial constraints. Just real development work at AI speed.

Filesystem MCP Tools for System Operations

- File operations (read, write, search, concurrent multi-file editing)

- System commands and process management

- Code analysis and refactoring across entire codebases

- Performance advantages over web-based alternatives

We recommend Desktop Commander as it was the first robust filesystem MCP tool and has proven reliable through extensive use, though other similar tools are now available.

Web Tools for External Information

- Research and documentation

- API references and examples

- Third-party service integration

- Market and competitive analysis

Decision Tree Example:

Need to modify code?
├─ Small change (< 5 files) → Brief discussion of approach, then implement
├─ Large refactor → Formal plan document, then chunked implementation
└─ New feature → Architecture discussion + formal plan, then implementation

Need external information?
├─ Technical documentation → Web search + fetch
├─ Code examples → Web search for patterns
└─ API specifications → Direct URL fetch
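The decision tree can also be written down as a small lookup, which is handy when it goes into a team template. This is only a sketch: the category names are invented for the example, and treating any change that touches five or more files as a large refactor is an assumption, since the tree does not say how to classify that case.

```python
# Where to look for each kind of external information, as listed in the tree.
INFO_SOURCES = {
    "technical_documentation": "Web search + fetch",
    "code_examples": "Web search for patterns",
    "api_specification": "Direct URL fetch",
}


def plan_code_change(kind: str, files_touched: int = 1) -> str:
    """Pick the level of planning ceremony for a code change."""
    if kind == "new_feature":
        return "Architecture discussion + formal plan, then implementation"
    # Assumption: changes touching 5+ files get the large-refactor treatment.
    if kind == "large_refactor" or files_touched >= 5:
        return "Formal plan document, then chunked implementation"
    return "Brief discussion of approach, then implement"
```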

The Mindshare Approach: Talk to AI like you would a trusted colleague - with stream-of-consciousness insights, background context, and natural explanation of constraints and motivations.

Always start with "why" embedded in "how"

Human: "I can tell when we're approaching context limits by looking at the scrollbar size, and it's frustrating to lose all our progress when we hit that wall. We need a token counter in the UI so I can see it coming and plan accordingly."

AI: "That makes perfect sense - you want early warning rather than hitting a cliff. For the UI integration, should this be:

- Real-time count that updates as you type?

- Conversation total vs. just current draft?

- Positioned where you can see it peripherally?"

Why This Works:

- Natural conversation reveals context that formal requirements miss

- Stream-of-consciousness often contains the most important insights

- Colleague-level sharing builds the mindshare needed for good solutions

- AI can reflect back understanding and surface questions organically

Anti-pattern: "Let me add a fallback mechanism..."

Better approach: "Let me understand why the primary approach isn't working..."

Why This Matters: Fallbacks often mask root problems and create technical debt. Vibe Coding emphasizes solving the right problem correctly.

The Polling Problem: AI polling long-running processes wastes context window space and prevents productive work on other tasks.

Human Monitoring Advantage: Humans can efficiently monitor processes using visual cues (progress bars, log scrolling, system notifications) while AI would need to repeatedly check status, consuming context with each poll.

For Long-Running Processes:

AI: "This database migration will take 15+ minutes. Rather than me polling every few seconds and wasting context window, I'll:

1. Provide you the command and monitoring approach

2. Set up success/failure criteria to watch for

3. Work on other tasks while you monitor

4. Resume when you signal completion or need troubleshooting

Command: python manage.py migrate --database=production

Watch for: 'Migration complete' or any ERROR messages

Should I proceed with the next task while you monitor this?"

Context Window Efficiency: This keeps AI productive on other work while humans use their superior I/O bandwidth for monitoring tasks.
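The success and failure criteria from the handoff can be captured in a small helper that classifies log output once, when the human signals that something has happened, instead of polling. A minimal sketch, assuming the two markers from the example dialogue; the function name and the status strings are hypothetical.

```python
from typing import Iterable, Optional, Tuple


def check_outcome(
    log_lines: Iterable[str],
    success_marker: str = "Migration complete",
    failure_marker: str = "ERROR",
) -> Tuple[str, Optional[str]]:
    """Return (status, matching line) for the first success or failure marker seen."""
    for line in log_lines:
        if failure_marker in line:
            return "failed", line.rstrip()
        if success_marker in line:
            return "succeeded", line.rstrip()
    # Neither marker has appeared yet, so the process is presumably still running.
    return "running", None
```

A single call on the pasted log tail costs far less context than repeated status checks.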

Philosophy: Modify only what needs changing to preserve working functionality and minimize risk.

The Pattern: Make precise, minimal changes rather than large refactoring operations that could introduce bugs or break existing systems.

# Good: Precise, minimal change
edit_block(
    file_path="service.py",
    old_string="def process_data(self):\n    return self.data",
    new_string="def process_data(self):\n    self.validate_input()\n    return self.data"
)

# Avoid: Large blocks with minor changes that risk breaking working code

Why This Matters: Working software is valuable. Surgical changes reduce risk, maintain system stability, and preserve the proven functionality while making targeted improvements.

When to Apply: Any time you're modifying existing, working code. The smaller and more focused the change, the easier it is to verify correctness and roll back if needed.
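The safety of a surgical edit comes from matching the old text exactly and refusing to guess when the match is ambiguous. The sketch below is a stand-in that illustrates the idea on an in-memory string; it is not how any particular editing tool is implemented, and the function name is hypothetical.

```python
def replace_exactly_once(source: str, old: str, new: str) -> str:
    """Replace `old` with `new` only if `old` occurs exactly once in `source`."""
    occurrences = source.count(old)
    if occurrences != 1:
        # Zero matches means the file drifted; several matches means the edit is ambiguous.
        raise ValueError(f"expected exactly 1 match for the old text, found {occurrences}")
    return source.replace(old, new, 1)
```

Failing loudly on zero or multiple matches keeps a small edit from silently landing in the wrong place.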

Note to LLMs: Chunked Writing Strategy: Always write files in chunks of 25-30 lines maximum. This is standard practice, not an emergency measure.

Implementation Pattern:

# Step 1: Create file with initial chunk
write_file(path, first_chunk, mode='rewrite')

# Step 2: Add subsequent chunks
write_file(path, second_chunk, mode='append')
write_file(path, third_chunk, mode='append')

Why: Reduces cognitive load, enables progressive review, minimizes context switching, and improves error recovery. Chunk proactively - don't wait for performance warnings!
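The same rewrite-then-append pattern can be sketched with plain file I/O. This example assumes a 30-line limit per chunk and uses ordinary Python file modes ("w" for the first chunk, "a" for the rest) in place of a tool call; the helper names are made up for illustration.

```python
from pathlib import Path


def split_into_chunks(text: str, max_lines: int = 30) -> list[str]:
    """Split text into pieces of at most `max_lines` lines, keeping line endings."""
    lines = text.splitlines(keepends=True)
    return ["".join(lines[i:i + max_lines]) for i in range(0, len(lines), max_lines)]


def write_in_chunks(path: Path, text: str, max_lines: int = 30) -> int:
    """Write the first chunk in rewrite mode and append the rest; return the chunk count."""
    chunks = split_into_chunks(text, max_lines) or [""]
    for index, chunk in enumerate(chunks):
        mode = "w" if index == 0 else "a"
        # newline="" writes the text as-is instead of translating line endings.
        with open(path, mode, encoding="utf-8", newline="") as handle:
            handle.write(chunk)
    return len(chunks)
```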

Verification Requirements:

- Functionality works as intended

- No regressions introduced

- Performance within acceptable bounds

- Integration points tested

Testing Handoff Pattern:

AI: "Implementation complete. For verification:

1. Run test_new_feature.py

2. Check integration with existing auth system

3. Verify performance benchmarks

4. Test error handling scenarios

Should I run these tests or would you prefer to verify?"

- Test incrementally during development

- Verify each chunk before proceeding

- Maintain test coverage metrics

- Document test scenarios in plans

Human: "We need to add real-time notifications"

AI: "Let me think through the architecture options:

1. **WebSocket-based**:

   - Pros: Real-time, bidirectional

   - Cons: Connection management complexity

   - Best for: High-frequency updates

2. **Server-Sent Events**:

   - Pros: Simpler, automatic reconnection

   - Cons: Unidirectional only

   - Best for: One-way notifications

3. **Polling with smart intervals**:

   - Pros: Simple implementation

   - Cons: Higher latency, more requests

   - Best for: Low-frequency updates

Given your current Flask stack and notification frequency requirements, I'd recommend SSE. Should we proceed with this approach?"

Result: Human makes informed decision with full context of trade-offs.
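To give a sense of why SSE counts as the simpler option, the sketch below formats a notification in the Server-Sent Events wire format: optional `event:` field, one `data:` field per payload line, and a blank line to end the message. The function name and the "notification" event name are assumptions for the example. In a Flask application, strings like these would typically be yielded from a generator returned in a response with the `text/event-stream` content type.

```python
from typing import Optional


def format_sse(data: str, event: Optional[str] = None) -> str:
    """Format one Server-Sent Events message."""
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # Multi-line payloads need one "data:" field per line.
    for part in data.split("\n"):
        lines.append(f"data: {part}")
    # A blank line terminates the message.
    return "\n".join(lines) + "\n\n"
```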

Human: "The Docker build is failing"

AI: "Let me investigate systematically:

1. Check the build log for specific errors

2. Verify Dockerfile syntax and layer dependencies

3. Check for base image compatibility issues

4. Examine any recent changes to dependencies

Rather than adding fallback mechanisms, I'll identify the root cause first."

Process:

- Gather information before proposing solutions

- Test hypotheses systematically

- Fix root cause, not symptoms

- Document resolution for future reference

Human: "The new auth service isn't working with our existing API"

AI: "Let me trace through the integration points:

1. Authentication flow and token format

2. API endpoint expectations vs. new service output

3. Error handling between services

4. Timing and timeout configurations

I see the issue - the token format changed. Instead of adding a compatibility layer, let's align the services properly."

Vibe Coding isn't limited to software engineers. Any domain expert can leverage their specialized knowledge to create tools they've always needed but couldn't build themselves.

Real-World Example: A veteran HR professional with decades of recruiting experience collaborated with AI to create a sophisticated interview assessment application. The human brought invaluable domain expertise—understanding what questions reveal candidate quality, how to structure evaluations, and the nuances of effective interviewing. The AI handled form design, user interface creation, and systematic organization of assessment criteria.

Result: A professional-grade interview tool created in hours that would have taken months to develop traditionally, combining lifetime expertise with rapid AI implementation.

Key Pattern:

- Domain Expert provides: Years of specialized knowledge, understanding of real-world requirements, insight into what actually works in practice

- AI provides: Technical implementation, interface design, systematic organization

- Outcome: Tools that perfectly match expert needs because they're built by experts

Our Experience: When we plan development work in one-week phases, we consistently complete approximately two full weeks' worth of planned work in a single six-hour focused session.

This means roughly two weeks of planned work compressed into 6 hours. Counting 14 calendar days of 24 hours gives a 56x time compression; counting 10 working days of 8 hours (80 hours) gives roughly 13x for planned, collaborative work.
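The compression ratio depends on how the two weeks are counted. The short calculation below shows both readings; the 8-hour working day and 5-day working week are assumptions introduced for this comparison.

```python
SESSION_HOURS = 6

# Two weeks counted as 14 calendar days of 24 hours each.
calendar_hours = 14 * 24

# Two weeks counted as 10 working days of 8 hours each (assumed schedule).
working_hours = 10 * 8


def compression(baseline_hours: float, session_hours: float = SESSION_HOURS) -> float:
    """Ratio of baseline effort to the length of the focused session."""
    return baseline_hours / session_hours


print(f"calendar basis: {compression(calendar_hours):.0f}x")
print(f"working-hours basis: {compression(working_hours):.1f}x")
```

On the calendar basis the ratio is 56x; on the working-hours basis it is a little over 13x.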

Why This Works: The combination of thorough planning, immediate AI implementation, and continuous human oversight eliminates most of the typical development friction:

- No research delays (AI has broad knowledge)

- No context switching between tasks

- No waiting for code reviews or approvals

- No debugging cycles from misunderstood requirements

- No time lost to repetitive coding tasks

- Fewer bugs: Human oversight catches issues early

- Better architecture: More time for design thinking

- Consistent code style: AI follows established patterns

- Complete documentation: Plans and decisions preserved

- Reproducible process: New team members can follow methodology

- Preserved context: Plans survive team changes

- Continuous learning: Both human and AI improve over time

- Scalable expertise: Senior engineers can guide multiple projects

- Desktop Commander: File operations, system commands, code analysis

- Claude Sonnet 4: Strategic thinking, architecture decisions, code review

- Git: Version control with detailed commit messages

- Docker: Containerization and deployment

- Python: Primary development language with extensive AI tooling

- MkDocs: Documentation and knowledge management

- GitHub: Code hosting and collaboration

- Plans folder: Context preservation across sessions

- Testing frameworks: Automated verification of changes

/project-root/
├── plans/   # Development plans and status
├── docs/    # Documentation and guides
├── src/     # Source code
├── tests/   # Test suites
└── docker/  # Deployment configurations

1. Plan → Discuss → Implement → Verify

   1. Create plan in plans/ folder

   2. Discuss approach and alternatives

   3. Implement in small, verifiable chunks

   4. Test each component before integration

2. Progressive Disclosure

   - Start with high-level architecture

   - Add detail as needed for implementation

   - Preserve decisions for future reference

   - Update plans with lessons learned

3. Human-AI Handoffs

AI: "This requires domain knowledge about your business rules. Could you provide guidance on how customer tiers should affect pricing?"

1. The Fallback Trap

❌ "Let me add error handling to catch this edge case"

✅ "Let me understand why this edge case occurs and fix the root cause"

2. Over-Engineering

❌ "I'll build a generic framework that handles all possible scenarios"

✅ "I'll solve the immediate problem and refactor when patterns emerge"

3. Context Amnesia

❌ Starting fresh each session without reading existing plans

✅ Always begin by reviewing current state and previous decisions

4. Tool Misuse

❌ Using web search for file operations

✅ Desktop Commander for local operations, web tools for external information

For complex projects, different AI agents can handle different aspects:

- Architecture Agent: High-level design and system integration

- Implementation Agent: Code generation and refactoring

- Testing Agent: Test creation and verification

- Documentation Agent: Technical writing and knowledge capture

# Large refactor example

1. Create detailed plan with checkpoint strategy

2. Implement core changes in chunks

3. Test each checkpoint before proceeding

4. Update plan with lessons learned

5. Continue or adjust based on results

# In plans/lessons-learned.md

## Problem: Authentication integration complexity

## Solution: Standardized token format across services

## Impact: 3 hours saved on future auth integrations

## Pattern: Define service contracts before implementation

Through hundreds of hours of real development work, we've identified these critical practices that separate successful Vibe Coding from common pitfalls:

The Key Rule: No code gets written until we agree on the plan.

Impact: This single rule eliminated nearly all wasted effort in our collaboration. When AI understands the full context upfront, implementation proceeds smoothly. When AI starts coding without complete context, it optimizes for the wrong requirements and creates work that must be discarded.

Why It Works: AI doesn't know what it doesn't know. Planning forces the human to surface all the context, constraints, and requirements that AI can't infer. Once established, execution becomes efficient and accurate.

Pattern: Always choose the simplest solution that solves the actual problem.

❌ "Let me build a comprehensive framework that handles all edge cases"

✅ "Let me solve this specific problem and refactor when patterns emerge"

Why This Works: Complex solutions create technical debt and make future changes harder. Simple, targeted changes preserve architectural flexibility.

Pattern: Modify only what needs changing, preserve working functionality.

# Good: Minimal, focused change
edit_block(
    file_path="service.py",
    old_string="def process():\n    return data",
    new_string="def process():\n    validate_input()\n    return data"
)

# Avoid: Large refactoring that could break existing functionality

Why This Matters: Working software is valuable. Surgical changes reduce risk and maintain system stability.

Critical Pattern: AI should never manage lengthy operations directly.

AI: "This database migration will take 15+ minutes. I'll provide the command and monitoring approach rather than running it myself:

Command: python manage.py migrate --database=production

Monitor: Check logs every 2 minutes for progress

Rollback: python manage.py migrate <app_label> 0001_initial --database=production

Would you prefer to run this yourself for better control?"

Human Advantage: Human oversight of long processes prevents resource waste and enables real-time decision making.

Anti-Pattern: "Let me add error handling to catch this edge case..."

Better Approach: "Let me understand why this edge case occurs and fix the root cause..."

❌ Fallback: Add try/catch to hide the real problem

✅ Root Cause: Investigate why the error happens and fix the source

Time Savings: Solving root problems prevents future debugging sessions and creates more maintainable code.

Rule: Nothing is complete until it's been tested and verified.

AI Implementation → Human/AI Testing → Verification Complete
        ↑                  ↓
        └─── Fix Issues If Found
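The verification loop can be expressed as a small driver that keeps cycling between implementation and testing until no issues remain. This is a sketch with hypothetical callables: `implement` receives the issues found in the previous round, `verify` returns a list of problems, and the limit of three rounds is an arbitrary choice for the example.

```python
from typing import Callable, List


def implement_until_verified(
    implement: Callable[[List[str]], None],
    verify: Callable[[], List[str]],
    max_rounds: int = 3,
) -> bool:
    """Alternate implementation and verification; True once verification finds no issues."""
    issues: List[str] = []
    for _ in range(max_rounds):
        # The first round starts with no issues; later rounds receive the failures to fix.
        implement(issues)
        issues = verify()
        if not issues:
            return True
    # Still failing after max_rounds: hand back to the human rather than looping on.
    return False
```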

Testing Handoff Options:

- "Should I run the tests or would you prefer to verify?"

- "Here's what needs testing: [specific scenarios]"

- "Implementation ready for verification: [verification checklist]"

- Set up tools: Desktop Commander, AI assistant, documentation system

- Start small: Choose a well-defined feature or bug fix

- Practice patterns: Plan → Discuss → Implement → Verify

- Document learnings: Build your pattern library

- Establish standards: File organization, documentation formats, handoff protocols

- Train together: Practice the methodology on shared projects

- Create templates: Standard plan formats, common decision trees

- Measure outcomes: Track speed and quality improvements

- Development velocity: Features delivered per sprint

- Code quality: Bug rates, review feedback, maintainability

- Knowledge retention: How quickly new team members become productive

- Context preservation: Ability to resume work after interruptions

Vibe Coding represents a fundamental shift from human-as-coder to human-as-conductor. By treating AI as a powerful but directed tool rather than a replacement, we achieve:

- 10x velocity improvements without sacrificing quality

- Better architecture through increased design thinking time

- Preserved expertise via documentation and knowledge transfer

- Scalable impact where senior engineers can guide multiple projects

This methodology isn't about replacing developers—it's about amplifying their impact. Senior engineers become force multipliers, able to execute complex projects that would normally require entire teams.

The companies that master human-AI collaboration today will dominate tomorrow's software landscape. Vibe Coding provides a proven framework for making that transition successfully.

Ready to Start? Begin with a small, well-defined feature in your current project. Apply the Plan → Discuss → Implement → Verify cycle. Set up a /plans folder in your project and create your first development plan using the methodology outlined here.

Want to learn more? Contact us to discuss how Vibe Coding can transform your development process, or explore our Services for enterprise implementations.

To demonstrate this methodology in practice, here's an actual session from our development work. The context: we were working in Claude Desktop with system prompts providing project structure information and collaboration preferences.

Human: "we could also add a token counter somewhere in the lit-desktop UI ... that's the kind of project that is super clear to a reader what we're after"

AI: "Perfect! A token counter in lit-desktop is an excellent demonstration project. It's:

- Crystal clear goal: Everyone understands what a token counter does

- Visual result: Immediate, tangible outcome users can see

- Right scope: Substantial enough to show methodology, small enough to complete

- Real value: Users actually want to track token usage"

Human: "i think it's per conversation. if you recall how you and I work here in claude desktop there's always this point about an hour into any project and we can both tell when it's approaching. i don't know how you know but I can tell by the size of the scroll bar..."

AI: Created detailed implementation plan covering:

- Technical decisions (client-side approximation vs server-side)

- UI placement (near text input for pre-send awareness)

- Update frequency (200ms debounce)

- Data flow (conversation total + live draft)

Before writing any code, we examined the existing lit-desktop structure:

- Located Angular chat components

- Found message input textarea with [(ngModel)]="currentInput"

- Identified messages: ChatMessage[] array for conversation data

- Determined optimal display location in status hint area

Service Creation: Built TokenCounterService with approximation algorithm

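countTokens(text: string): number {
  const trimmed = text.trim();
  // Empty input would otherwise be counted as one word.
  if (!trimmed) return 0;
  // Heuristic 1: roughly 4 characters per token.
  const charBasedTokens = Math.ceil(text.length / 4);
  // Heuristic 2: roughly 0.75 words per token.
  const wordBasedTokens = Math.ceil(trimmed.split(/\s+/).length / 0.75);
  // Average the two estimates.
  return Math.round((charBasedTokens + wordBasedTokens) / 2);
}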
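For readers who want to try the approximation outside the browser, here is a rough Python port with worked values. It is a sketch, not the service code: returning 0 for empty input is a choice made in this port, and Python counts code points where JavaScript counts UTF-16 code units, so results can differ for some non-ASCII text.

```python
import math


def count_tokens(text: str) -> int:
    """Approximate a token count by averaging a character-based and a word-based estimate."""
    words = text.split()
    if not words:
        return 0
    char_based = math.ceil(len(text) / 4)      # roughly 4 characters per token
    word_based = math.ceil(len(words) / 0.75)  # roughly 0.75 words per token
    # floor(x + 0.5) rounds halves up, like JavaScript's Math.round for positive values.
    return math.floor((char_based + word_based) / 2 + 0.5)


print(count_tokens("hello world"))  # 11 chars -> 3, 2 words -> 3, average 3
```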

Component Integration: Added token counting to chat page component

- Injected service into existing constructor

- Added properties for conversation and draft token counts

- Integrated with message loading and session switching

Template Updates: Modified HTML to display counter

<span *ngIf="!isLoading">
  <span>Lit can make mistakes</span>
  <span *ngIf="tokenCountDisplay" class="token-counter"> • {{ tokenCountDisplay }}</span>
</span>

Browser Compatibility Issue: Initial tokenizer library required Node.js modules

- Problem: gpt-3-encoder needed fs/path modules unavailable in browser

- Solution: Replaced with custom approximation algorithm

Duplicate Method: Accidentally created conflicting function

- Problem: Created duplicate onInputChange method

- Solution: Integrated token counting into existing method

Human: "are we capturing the event for switching sessions?"

This caught a gap in our implementation - token count wasn't updating when users switched conversations. We added the missing updates to selectChat() and createNewChat() methods.

Human: "instead + tokens how about what the new total would be"

UX improvement suggestion led to cleaner display format:

- Before: "~4,847 tokens (+127 in draft)"

- After: "~4,847 tokens (4,974 if sent)"
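The revised display format is easy to state as a function of the two counts. The sketch below reproduces the "After" string from the conversation total and the draft size; the function name is hypothetical, and showing only the total when the draft is empty is an assumption of this example.

```python
def format_token_display(conversation_tokens: int, draft_tokens: int) -> str:
    """Show the conversation total and what the total would become if the draft were sent."""
    if draft_tokens <= 0:
        # Assumption: with an empty draft there is no projected total to show.
        return f"~{conversation_tokens:,} tokens"
    projected = conversation_tokens + draft_tokens
    return f"~{conversation_tokens:,} tokens ({projected:,} if sent)"


print(format_token_display(4847, 127))  # ~4,847 tokens (4,974 if sent)
```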

Development Outcome: Successfully implemented token counter feature across 4 files with clean integration into existing Angular architecture.

Timeline (based on development session):

- 14:30 - Planning and architecture discussion complete

- 14:32 - Service implementation with token approximation algorithm

- 14:34 - CSS styling for visual integration

- 14:36 - HTML template updates for display

- 14:40 - Component integration and event handling

- 14:40-15:00 - Human stuck in meeting; AI waits

- 15:03 - UX refinement and improved display format

- 15:04 - Final verification and testing

Total active development time: 14 minutes of implementation, UX refinement, and verification (14:30 to 14:40 and 15:00 to 15:04), following the completed planning discussion

The session shows core Vibe Coding principles in action:

- Human strategic direction: Clear problem definition and UX decisions

- AI tactical execution: Architecture research and rapid implementation

- Continuous verification: Testing and validation at each step

- Quality collaboration: Human oversight caught integration gaps and suggested UX improvements

- Surgical changes: Modified existing architecture rather than building from scratch

- Context preservation: Detailed planning enabled seamless execution

The development session demonstrates how Vibe Coding enables complex feature development in minutes rather than hours, while maintaining high code quality through human oversight and systematic verification.


Ben Vierck is a senior software architect and CTO at JOV.AI, with over a decade of experience leading engineering teams at Fortune 100 companies. He developed the Vibe Coding methodology through hundreds of hours of real-world collaboration with AI development assistants while building production systems and open source projects.

Tags: AI Development, Human-AI Collaboration, Software Engineering, Development Methodology, Productivity, AI Tools, Enterprise Development