92% of Nonprofits Use AI. Only Half Have a Policy. Here's What One Foundation Built.

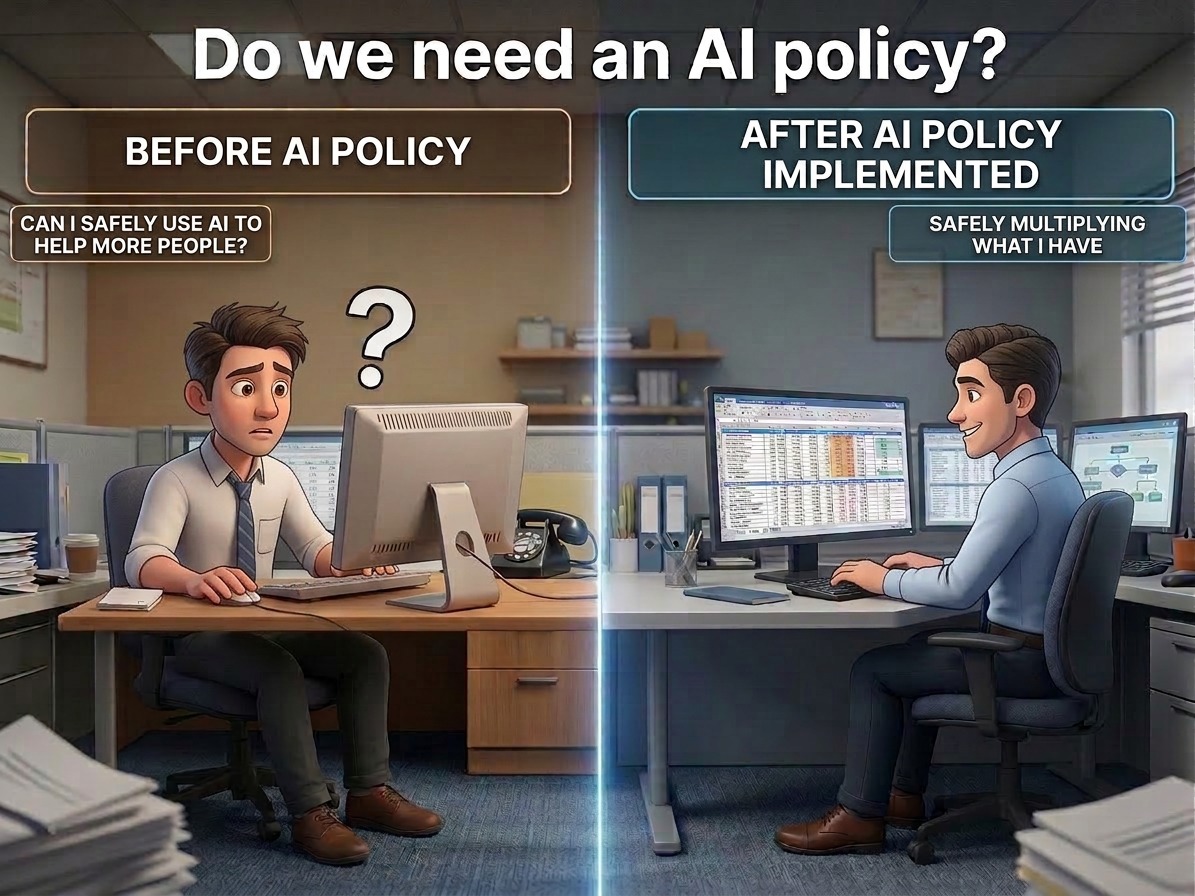

Your staff is already using AI. You probably know that. What you might not know is which tools, on which data, with what guardrails.

The 2026 Nonprofit AI Adoption Report put a number on it: 92% of nonprofits are using AI in some capacity. But 47% have no governance policy at all. And 81% are using AI individually, with no shared workflows, no documentation, and no organizational learning.

That's not an AI problem. That's a risk management problem hiding in plain sight.

At the end of last year, we helped The Catholic Foundation in Dallas build an AI governance framework from scratch. Policy, training, board approval, the whole thing. Here's what the process looked like and what we learned doing it.

The Problem Isn't AI. It's What You Don't Know About.

The risk that keeps me up at night for organizations like this isn't a sophisticated cyberattack. It's a well-meaning staff member pasting sensitive information into a free AI tool to draft an email.

That's not hypothetical. Last July, IBM's Cost of a Data Breach Report found that one in five organizations experienced a breach tied to shadow AI: tools employees use without IT approval. Those breaches cost an average of $670,000 more than standard incidents. UpGuard confirmed what we see on every engagement: 81% of employees are already using unapproved AI tools at work. Including the security professionals.

For foundations built on donor trust, "we didn't know" is not a sufficient answer. Neither is "we're working on a policy."

What the Process Actually Looks Like

When The Catholic Foundation reached out, they weren't reacting to an incident. They were getting ahead of one. AI features were showing up in the tools their team already used, whether anyone asked for them or not. People wanted to use AI the right way. They just didn't have a playbook. Leadership decided to build the framework before that ambiguity became a problem.

Here's what we mapped out in a few weeks:

Data classification. Not every piece of information carries the same risk. The work starts with drawing clear lines: what never touches an AI tool under any circumstances, what can be used with explicit approval, and what's fair game. The test is simple: if it would be devastating on the front page of a newspaper, it stays out of AI completely.

Tool evaluation. Not all AI tools are created equal. Enterprise tools with contractual data protection agreements are fundamentally different from free consumer tools that may use your data for training. The policy needs a clear approved and prohibited list.

Staff training. Not a lecture about AI theory. Scenario-based: "This situation just came up. What do you do?" The questions that surface are the kind you can't anticipate from a desk: AI features appearing unprompted in existing software, third parties on calls running AI recorders, voice assistants on personal phones.

Board alignment. The governance committee reviewed the policy before it went to the full board. Board members brought the right questions and the experience to evaluate the framework on its merits. The full board approved it, no revisions needed.

The core framework was built in weeks. Review and board approval added a few months to the calendar, but that's governance working the way it should. No dedicated AI team required. No year-long compliance project. Just a decision to be intentional about it.
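For teams that want to make the first two steps concrete, the data classification tiers and the approved/prohibited tool list can be written down as a small lookup. What follows is a minimal sketch in Python, not the Foundation's actual policy: the tier names, the tool names, and the `may_use` function are all hypothetical, and treating any unlisted tool as prohibited is an assumption made for illustration.

```python
from enum import Enum


class DataTier(Enum):
    """Three tiers from the data classification step (names are illustrative)."""
    NEVER = "never"        # never touches an AI tool under any circumstances
    APPROVAL = "approval"  # usable only with explicit approval
    OPEN = "open"          # fair game


# Hypothetical tool lists; a real policy would name actual products.
APPROVED_TOOLS = {"enterprise-assistant"}
PROHIBITED_TOOLS = {"free-consumer-chatbot"}


def may_use(tool: str, tier: DataTier, has_approval: bool = False) -> bool:
    """Return True only if this sketch of the policy allows the tool on this data."""
    # Assumption: anything not explicitly approved is treated as prohibited.
    if tool in PROHIBITED_TOOLS or tool not in APPROVED_TOOLS:
        return False
    if tier is DataTier.NEVER:
        return False  # approval cannot override the top tier
    if tier is DataTier.APPROVAL:
        return has_approval
    return True


print(may_use("enterprise-assistant", DataTier.OPEN))      # True
print(may_use("enterprise-assistant", DataTier.APPROVAL))  # False
print(may_use("free-consumer-chatbot", DataTier.OPEN))     # False
```

The point of the sketch is that the policy answers one question with two inputs: which tool, and which tier of data. If a staff member can't name both, the safe answer is no.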

Why This Matters Beyond One Foundation

Last September, CEP confirmed what we were already seeing: almost two-thirds of foundations and nonprofits are using AI, but data security remains the top concern among foundation leaders, cited by more than 80%. Concern alone, though, doesn't build a framework.

Foundations won't get forced into this conversation by strategy. They'll get forced into it by a board question, a compliance review, or a staff member asking what's allowed.

The Catholic Foundation won't be explaining why they don't have a policy. They'll be pointing to the one they built.

Start With Three Questions

If you lead a foundation or nonprofit, here's where the work begins:

- What information does your organization handle that would be catastrophic to expose?

- What AI tools are your staff using right now, with or without your knowledge?

- Do you have a written policy that answers question two in light of question one?

If the answer to question three is no, that's the gap. And closing it doesn't require a dedicated AI team or a six-figure consulting engagement. It requires a decision to be intentional about how your organization uses AI before the decision gets made for you.

If you want to talk through what a nonprofit AI governance policy looks like for your organization, reach out. Not a sales pitch, just a straight conversation about your situation. We'll tell you if you need a formal policy yet. And if you do, we'll show you exactly where to start.

Sources:

- 2026 Nonprofit AI Adoption Report, Virtuous/Fundraising.AI, February 2026

- CEP "AI With Purpose" Report, September 2025

- IBM 2025 Cost of a Data Breach Report, July 2025

- UpGuard "State of Shadow AI" Research, November 2025